Sahana Vijaya Prasad

April 14, 2026

8 min read

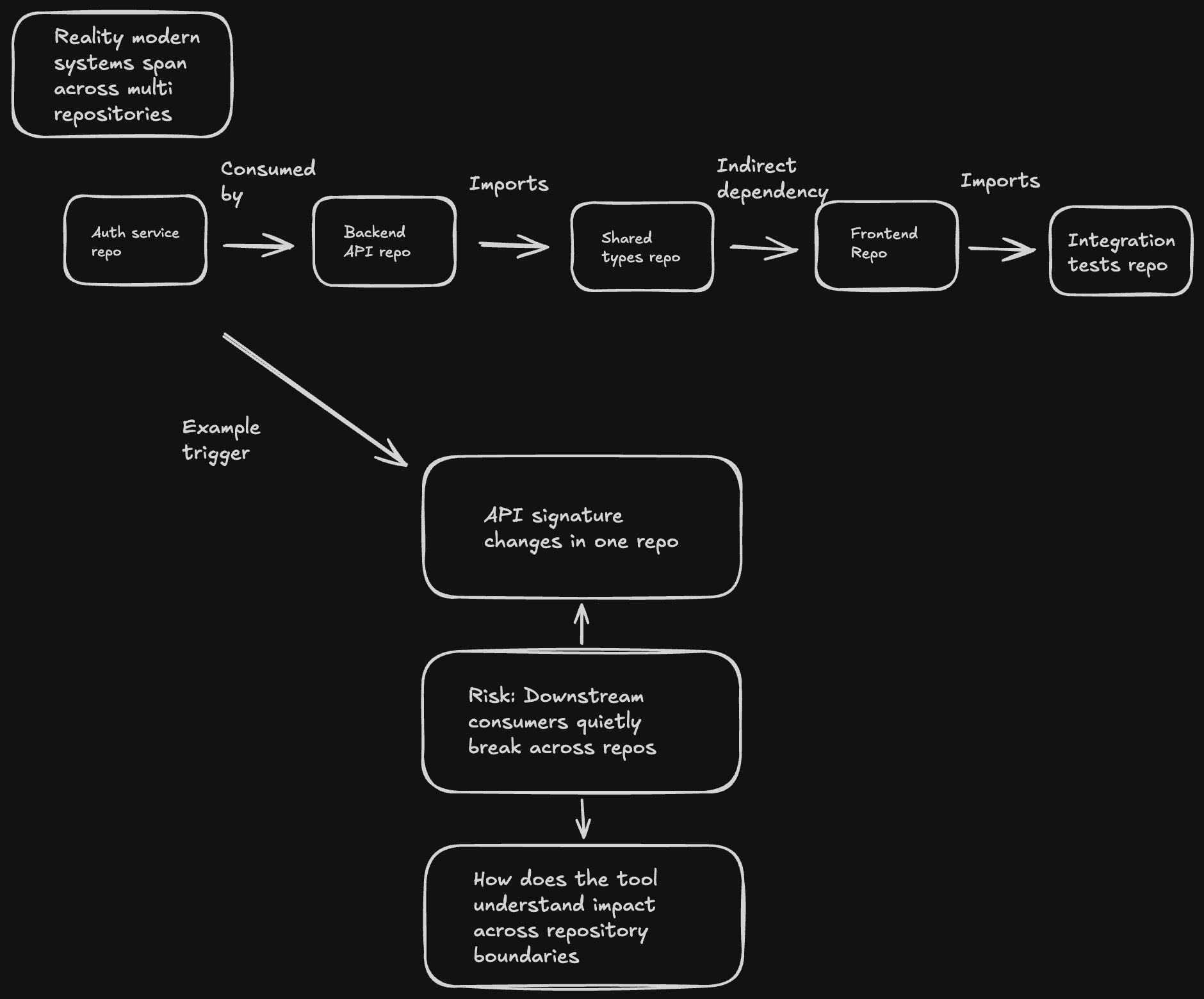

Software development today is rarely limited to a single repository. A complex system might involve a microservices backend, a shared type library, a frontend application, and an integration test suite, all living in separate repositories.

Because of this, changing an API signature in one repository can quietly break consumers in several others.

Figure 1: Modern systems span multiple repositories — a change in one can silently break others

Traditional code review tools treat each pull request as an isolated unit. When a reviewer catches a cross-repo breaking change, it usually happens because they already understand the system, not because the tooling surfaced it.

The real question for engineering leaders evaluating code review tools is simple: how does the tool understand impact across repository boundaries?

The answer exposes a fundamental architectural divide between tools that rely on pre-built vector indexes and tools that actively explore your code at review time.

Before explaining why agentic systems win for cross-repo analysis, it’s worth being direct: CodeRabbit has been building and running this kind of agent-based validation loop since 2024, before this architectural pattern became industry consensus.

The approach wasn’t inspired by Anthropic’s “Building Effective Agents” guide or Google Cloud’s writings on Agentic RAG. Those publications validated what we had already learned in practice: that code review across repository boundaries is fundamentally an investigation problem, not a retrieval problem. You can’t pre-index your way to the right answer when you don’t know in advance which files matter.

Here’s a concrete example of the kind of validation script our agent generates when reviewing cross-repo impact:

```typescript
// Agent-generated validation: UserService.createUser signature change
// PR: auth-service #1423 — adds required roleId parameter
const impactedCallSites = [
  {
    repo: "org/backend-api",
    file: "src/controllers/admin.ts",
    line: 45,
    currentCall: "userService.createUser(email, name)",
    issue: "Missing required roleId argument — will throw at runtime",
    severity: "breaking"
  },
  {
    repo: "org/backend-api",
    file: "src/controllers/onboarding.ts",
    line: 112,
    currentCall: "createUser({ ...userPayload })",
    issue: "Spread object may not include roleId — needs verification",
    severity: "warning"
  },
  {
    repo: "org/integration-tests",
    file: "tests/fixtures/user-factory.ts",
    line: 23,
    currentCall: "UserService.createUser(email, name)",
    issue: "Test fixture calls old signature — will fail in CI",
    severity: "breaking"
  }
];
```

This is what the agent produces: precise, file-level findings grounded in live code, not a list of semantically similar snippets. The rest of this post explains why that difference is architectural, and why tools that still rely solely on RAG pipelines can’t replicate it.

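The dominant pattern follows the RAG pipeline: chunk the codebase, embed each chunk, store the vectors in an index, retrieve the top-K most similar chunks at review time, and pass them to the model to generate the review.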

This approach is well-understood and broadly adopted. Forrester’s analysis confirmed RAG as the default architecture for enterprise knowledge assistants. But research has identified structural weaknesses that are particularly acute when the task is code review across repositories — a domain where precision matters and false confidence is dangerous.

When the initial search misses the relevant code, due to semantic mismatch, poor chunking that splits a function across two fragments, or because the relationship is structural rather than textual, the system has no recovery mechanism.

For code review, this means: if the vector search doesn’t find the downstream consumer of the API you just changed, the tool won’t tell you it exists. No second chance, no alternative strategy.

Industry data underscores the severity: NVIDIA’s technical blog reports that standard RAG “retrieves once and generates once, searching a vector database, grabbing the top-K chunks, and hoping the answer is in those chunks.” When that single shot misses, the entire review is compromised.
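To make the single-shot failure mode concrete, here is a minimal sketch of top-K retrieval. The embeddings, the `auth-service` file paths, and the helper names are hypothetical and chosen only for illustration; this is not any vendor's actual pipeline.

```typescript
// Hypothetical chunk record: an identifier plus a toy 3-dimensional embedding.
interface Chunk {
  id: string;
  embedding: number[];
}

// Cosine similarity between two vectors of equal length.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Single-shot retrieval: rank once, keep the top K, never look again.
function retrieveTopK(query: number[], index: Chunk[], k: number): Chunk[] {
  return [...index]
    .sort((x, y) => cosine(query, y.embedding) - cosine(query, x.embedding))
    .slice(0, k);
}

const index: Chunk[] = [
  { id: "auth-service/src/user-service.ts", embedding: [0.9, 0.1, 0.0] },
  { id: "auth-service/src/user-service.test.ts", embedding: [0.8, 0.2, 0.1] },
  // The downstream consumer looks textually different from the changed code,
  // so its (made-up) embedding sits far from the query.
  { id: "backend-api/src/controllers/admin.ts", embedding: [0.1, 0.2, 0.9] },
];

// Query vector standing in for "the code this PR changed".
const retrieved = retrieveTopK([1, 0, 0], index, 2).map((c) => c.id);
console.log(retrieved);
```

With K set to 2, the two files that resemble the changed code fill the result set and the consumer in `backend-api` never reaches the model. Nothing in this flow notices the omission or retries.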

Modern vector databases have significantly reduced raw indexing latency, and many now apply updates within seconds. But “fresh” infrastructure doesn’t guarantee correct or complete context. RAG pipelines still depend on multiple steps: detecting changes, re-chunking files, recomputing embeddings, and updating indexes. In multi-repository systems this compounds, because every linked repository’s index must complete all of those steps before it reflects the code under review.

The consequence of relying on incomplete or inconsistent analysis in code review is often false confidence. Agentic systems circumvent this risk by analyzing the code live at the time of review.
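A rough way to picture the multi-repository freshness problem: a cross-repo answer is only as current as the stalest index it draws on. The sketch below assumes a hypothetical `IndexState` record per repository; the commit hashes and the `org/auth-service` name are invented for illustration.

```typescript
// Hypothetical record of what a vector index last saw for one repository.
interface IndexState {
  repo: string;
  indexedCommit: string; // commit the index was built from
  headCommit: string; // commit currently at HEAD
}

// A repository is stale when its index was built from a commit other than HEAD.
function staleRepos(states: IndexState[]): string[] {
  return states
    .filter((s) => s.indexedCommit !== s.headCommit)
    .map((s) => s.repo);
}

const states: IndexState[] = [
  { repo: "org/auth-service", indexedCommit: "a1b2c3", headCommit: "a1b2c3" },
  { repo: "org/backend-api", indexedCommit: "d4e5f6", headCommit: "0f9e8d" },
  { repo: "org/integration-tests", indexedCommit: "778899", headCommit: "778899" },
];

const stale = staleRepos(states);
// One lagging index is enough to make the combined view inconsistent.
const allFresh = stale.length === 0;
console.log(stale, allFresh);
```

An agent that reads code at HEAD during the review sidesteps this bookkeeping, since there is no index to fall behind.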

A common problem in code analysis is that retrieved information can be semantically similar yet irrelevant, and it contaminates the AI’s reasoning. Anthropic’s engineering team has documented this as “context rot.” In code review, it shows up as confident-sounding analysis grounded in the wrong code, which is arguably worse than no analysis at all.

Code relationships are fundamentally structural, not semantic. For instance, a function call, an import statement, or a reference to a protobuf schema represents a graph relationship, a structure that similarity search methods struggle to identify. If a shared type definition is modified, the critical factor is identifying the code that imports it, rather than finding code chunks that are merely textually similar.
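As an illustration of following a structural relationship instead of textual similarity, the sketch below finds every file that imports a given symbol. The package name `@org/shared-types`, the file contents, and `findImporters` are all assumptions made for the example, and the regex is a simplification: a production tool would more likely use a real parser.

```typescript
// Hypothetical in-memory snapshot of files from two linked repositories.
const files: Record<string, string> = {
  "backend-api/src/controllers/admin.ts":
    'import { UserService } from "@org/shared-types";\nconst svc = new UserService();',
  // Mentions users in a comment but imports nothing from the shared package.
  "backend-api/src/util/format.ts":
    "// formats a user record for display\nexport const fmt = (s: string) => s.trim();",
  "integration-tests/tests/fixtures/user-factory.ts":
    'import { UserService, Role } from "@org/shared-types";',
};

// Return every file that imports `symbol` from `moduleName` via a named import.
function findImporters(
  symbol: string,
  moduleName: string,
  snapshot: Record<string, string>,
): string[] {
  const hits: string[] = [];
  for (const path of Object.keys(snapshot)) {
    // Fresh regex per file so the global lastIndex state does not leak.
    const importRe = /import\s*\{([^}]*)\}\s*from\s*["']([^"']+)["']/g;
    let m: RegExpExecArray | null;
    while ((m = importRe.exec(snapshot[path])) !== null) {
      // Strip "as alias" so `import { A as B }` still matches on A.
      const names = m[1].split(",").map((n) => n.trim().split(/\s+as\s+/)[0]);
      if (m[2] === moduleName && names.indexOf(symbol) !== -1) {
        hits.push(path);
        break;
      }
    }
  }
  return hits.sort();
}

const importers = findImporters("UserService", "@org/shared-types", files);
console.log(importers);
```

The formatter file talks about users but is correctly excluded, because the question being asked is "who imports this?" and not "what looks like this?".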

A vector search can find code that looks like the code you changed. It cannot determine that src/controllers/admin.ts:45 calls userService.createUser(email, name) with two arguments while your PR changes the signature to require three. That requires reading the code, understanding the call site, and reasoning about the mismatch.
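The argument-count reasoning described above can be sketched in a few lines. This is a deliberately naive check under stated assumptions: `countArgs` and `findArityMismatches` are hypothetical helpers, the `invite.ts` call site is invented as a passing case, and the counter ignores commas inside string literals.

```typescript
interface CallSite {
  repo: string;
  file: string;
  line: number;
  call: string;
}

// Count top-level arguments in a call expression such as "f(a, g(b, c))".
function countArgs(call: string): number {
  const open = call.indexOf("(");
  const close = call.lastIndexOf(")");
  if (open === -1 || close <= open) return 0;
  const inner = call.slice(open + 1, close).trim();
  if (inner === "") return 0;
  let depth = 0;
  let count = 1;
  for (let i = 0; i < inner.length; i++) {
    const ch = inner[i];
    if ("([{".indexOf(ch) !== -1) depth++;
    else if (")]}".indexOf(ch) !== -1) depth--;
    else if (ch === "," && depth === 0) count++; // only top-level commas split arguments
  }
  return count;
}

// Keep the call sites that pass fewer arguments than the new signature requires.
function findArityMismatches(sites: CallSite[], requiredParams: number): CallSite[] {
  return sites.filter((s) => countArgs(s.call) < requiredParams);
}

const sites: CallSite[] = [
  { repo: "org/backend-api", file: "src/controllers/admin.ts", line: 45, call: "userService.createUser(email, name)" },
  { repo: "org/integration-tests", file: "tests/fixtures/user-factory.ts", line: 23, call: "UserService.createUser(email, name)" },
  // Hypothetical call site that already passes roleId.
  { repo: "org/backend-api", file: "src/controllers/invite.ts", line: 8, call: "userService.createUser(email, name, roleId)" },
];

// The PR makes roleId a third required parameter.
const broken = findArityMismatches(sites, 3);
console.log(broken.map((s) => `${s.repo}:${s.file}:${s.line}`));
```

A call such as `createUser({ ...userPayload })` passes a single object, so argument counting alone cannot settle it; that is the kind of case that needs the type definition read and is best flagged for verification.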

Anthropic drew the clearest line in its influential “Building Effective Agents” guide: agents suit open-ended problems where "it's difficult or impossible to predict the required number of steps." Cross-repository impact analysis fits that description precisely.

OpenAI released the Agents SDK in March 2025 for scenarios where teams shifted “from prompting step-by-step to delegating work to agents.”

Google Cloud stated it most directly: “The most powerful approach to grounding is Agentic RAG, where the agent is no longer a passive recipient of information but an active, reasoning participant in the retrieval process itself.”

These publications reflect where the industry is converging. They also describe exactly what CodeRabbit has been doing since 2024.

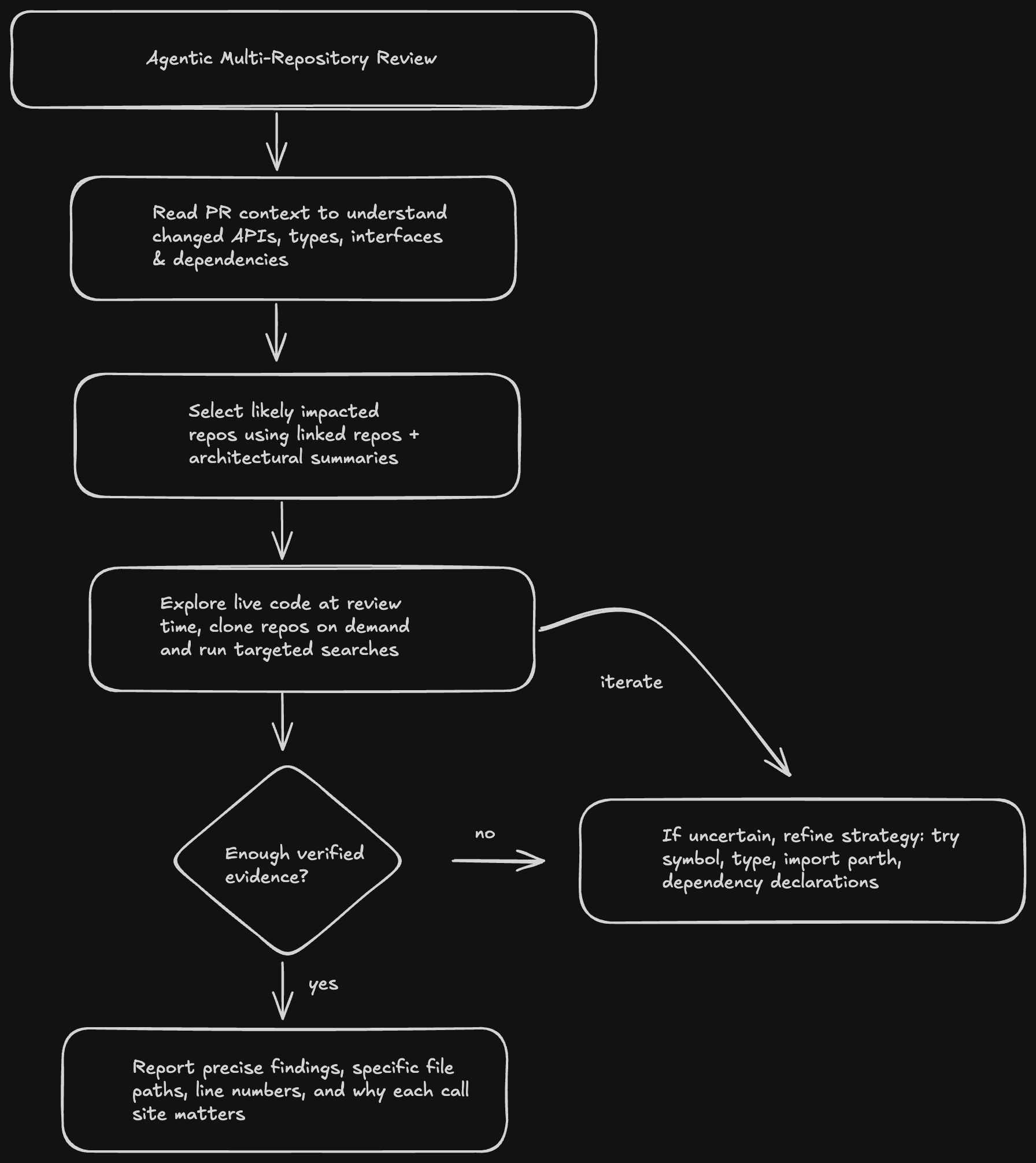

CodeRabbit’s multi-repository analysis embodies the agentic architecture. Rather than pre-indexing code into static representations and hoping the right chunks surface at query time, CodeRabbit deploys an autonomous research agent that actively explores linked repositories in real time.
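The shape of such an exploration loop can be sketched as follows. Every name here (`investigate`, `Strategy`, the strategy labels, the stubbed `verify`) is hypothetical; this is an illustration of iterating until evidence is verified, not CodeRabbit’s implementation.

```typescript
interface Finding {
  file: string;
  line: number;
  verified: boolean;
}

// A search strategy proposes candidate locations for a symbol.
type Strategy = (symbol: string) => Finding[];

interface Investigation {
  findings: Finding[];
  steps: string[]; // which strategies were actually run, in order
}

// Try strategies in turn until one yields verified evidence or the budget runs out.
function investigate(
  symbol: string,
  strategies: Array<{ name: string; run: Strategy }>,
  verify: (f: Finding) => boolean,
  maxSteps: number,
): Investigation {
  const findings: Finding[] = [];
  const steps: string[] = [];
  for (const { name, run } of strategies) {
    if (steps.length >= maxSteps) break;
    steps.push(name);
    for (const candidate of run(symbol)) {
      // Only candidates confirmed by reading the code count as evidence.
      if (verify(candidate)) findings.push({ ...candidate, verified: true });
    }
    if (findings.length > 0) break; // verified evidence found: stop exploring
  }
  return { findings, steps };
}

const result = investigate(
  "createUser",
  [
    // First attempt comes back empty, e.g. the consumer imports via a shared package.
    { name: "search direct imports of auth-service", run: () => [] },
    {
      name: "search createUser call sites",
      run: () => [{ file: "src/controllers/admin.ts", line: 45, verified: false }],
    },
    { name: "scan test fixtures", run: () => [] },
  ],
  // Stand-in for opening the file and confirming the call really is there.
  (f) => f.file.length > 0 && f.line > 0,
  5,
);
console.log(result.steps, result.findings);
```

The first strategy returning nothing is not the end of the investigation; the loop simply moves on, which is the recovery step a single-shot retrieval lacks. A fuller version would keep exploring after the first hit to collect every affected call site.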

Configuration is simple. Teams declare which repositories are related:

```yaml
knowledge_base:
  linked_repositories:
    - repository: "org/backend-api"
      instructions: "Contains REST API consumers of shared types"
    - repository: "org/integration-tests"
      instructions: "End-to-end test fixtures"
```

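When a PR is opened, the agent executes a multi-step research strategy: it searches the linked repositories, follows references, and reads files to verify each candidate, running alternative searches when a result looks incomplete.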

Figure 2: CodeRabbit’s agentic review flow — iterates until it has verified evidence

Consider a Pull Request that modifies the UserService.createUser method signature in the auth-service repository, introducing a mandatory roleId parameter. While a RAG-based tool can identify code fragments containing the string "createUser," it lacks the capability to determine if these call sites will actually fail due to the signature change.

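backend-api (org/backend-api): src/controllers/admin.ts:45 calls userService.createUser(email, name) without the required roleId and will throw at runtime; src/controllers/onboarding.ts:112 passes a spread object that may not include roleId and needs verification.

integration-tests (org/integration-tests): tests/fixtures/user-factory.ts:23 calls the old signature and will fail in CI.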

The difference is not incremental. It is the difference between “here are some similar code chunks” and “here are the three call sites that will break, with file paths and line numbers.”

| Dimension | RAG-based review tools | CodeRabbit (Agentic) |
| --- | --- | --- |
| Data freshness | Reflects last index build (hours to days old) | Live code at HEAD, always current |
| Recovery from missed results | None; single-shot retrieval with no fallback | Agent iterates: tries alternative searches, follows references, reads files to verify |
| Understanding code relationships | Textual similarity only; cannot follow imports, call graphs, or type hierarchies | Navigates code structurally: greps for imports, reads call sites, follows type definitions |
| Reasoning about impact | Returns similar chunks; cannot reason about whether a call site will break | Reads code, counts arguments, checks type compatibility; reasons about actual impact |
| Handling ambiguity | Returns top-k results regardless of confidence | Agent reflects on result quality, runs refined searches when uncertain, stops when self-contained |
| Precision of findings | Code chunks (often partial, sometimes irrelevant) | Specific files, line numbers, and explanations of why the finding matters |
| Security model | Requires persistent index of your code in external services | On-demand cloning into isolated sandboxes; no persistent code storage |

Anthropic, OpenAI, Google, and Microsoft are all investing heavily in agentic infrastructure, including MCP, the Agents SDK, the Agent Development Kit, and the A2A Protocol. This consensus signals where AI-powered tooling is heading: autonomous, reasoning systems are poised to replace static retrieval pipelines.

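Cross-repository code review requires live code rather than a stale index, structural navigation of imports and call sites, recovery when the first search misses, and reasoning about whether each call site actually breaks.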

Retrieval-Augmented Generation (RAG) is fundamentally mismatched for multi-repository code review. RAG excels at question-answering by grounding LLMs in a knowledge base, but analyzing code across repositories demands an investigative approach, not mere knowledge retrieval.

CodeRabbit’s choice to use an agentic architecture for cross-repository impact analysis isn’t a response to industry trends. It’s what we built because it’s the only architecture that actually solves the problem. The industry is catching up to where we’ve been since 2024.

Want to see CodeRabbit’s cross-repository analysis in action? Try it for free on your next PR.