Harjot Gill

April 22, 2026

12 min read

> Schedule disasters and system bugs arise because the left hand doesn't know what the right hand is doing – Fred Brooks in The Mythical Man-Month describing the IBM OS/360 Project’s coordination failures that nearly sank it.

The Mythical Man-Month remains one of the most popular books in engineering management and a staple on leaders’ shelves.

Fifty years of SDLC evolution has been one long argument against that failure mode. Agile placed developers in the same room as the product. DevOps tore down the wall between dev and ops. Git and pull requests gave teams a shared record of what changed and why. CI made the build everyone's problem. Platform teams built paved roads so tribal knowledge wouldn't have to be re-earned by every new hire. Every major shift was a bet on shared understanding beating individual heroics.

Then coding agents arrived, and we regressed.

Every engineer now runs a private agent, on their own machine, in sessions nobody else can see. The agent starts each morning knowing nothing. Whatever reasoning was assembled yesterday, whatever alternatives were weighed, context loaded, and decisions made in standups are all gone. The process starts to break, yet the breakage gets mistaken for progress: “We are shipping faster than ever!”

Brooks was describing human teams drifting apart over weeks. We're now adding AI teammates who drift apart within a single workday, and calling it high productivity.

A new hire at least retains what they learned yesterday.

Recently, I watched a senior engineer spend 11 minutes setting up context before his coding agent wrote a single line. He described the architecture, and explained why they use Postgres and something else. Finally, he pasted three files, a Linear ticket, and a Slack message of context from his tech lead about a service being deprecated.

The agent wrote some code, pretty good code, actually.

At the end, the senior engineer remarked that the next morning he would open a new session and probably do the whole thing again from scratch, for a different context. The agent lacks team-level insight.

This is AI-assisted development in 2026.

Nobody is talking about what the agent knows before it starts working.

The right framing of a problem makes an average thinker perform like a brilliant one, at least for humans. For AI agents, the gap is even wider. The same model, given the same prompt, will produce wildly different code depending on whether it understands the system it's working in.

Give an agent your codebase with no context and you get plausible-looking code that misses every convention your team spent two years establishing. Give the same agent your codebase plus your architectural decisions, ticket history, on-call runbooks, and the Slack thread where your team decided to deprecate the old auth service, and you get code that looks like a teammate wrote it.

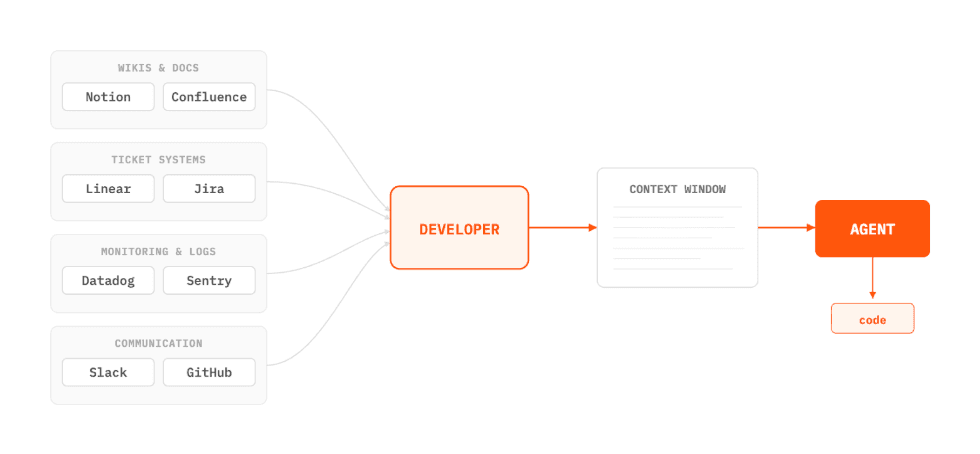

Right now, developers are the ones manually assembling that context every single session, acting as interpreters between their own engineering org and a tool that should already know.

The context problem is the most visible symptom, but the disease is more systemic. There are at least five ways the current generation of agents forget:

Every session starts cold with no context on systems and choices. The agent opens a file and has no idea why your team chose Postgres over Redis for queues, which auth service is about to be deprecated, or that you decided last quarter to let errors bubble up unwrapped. However, that context exists in a PRD nobody reads, a Slack thread from March, and a senior engineer's head. The first thing a developer does every morning is translate it into a prompt.

Again, the dev is reconstructing information that already exists somewhere else in your company, and is adding it into a context window that is limited to a personal session.

The agent you taught yesterday isn't the agent you're using today. You walked it through the billing module: the retry logic that looks wrong but isn't, why you can't trust Stripe's idempotency key on webhooks, and the failed refactor from last summer that's still in the git history as a warning. It produced good code.

You closed the tab. What's left is a commit. The reasoning that led to it (the part you'd actually want to keep) went away with the session. Open the same file next week, and you'll do it all again.

PS: Yes, you may be storing and sharing this in agents.md or another durable knowledge base. But is it updated often enough to capture the crucial team context, and is it easy enough to maintain alongside the team's day-to-day work?

Coding agents are single player. One developer, one session, one machine. The work is invisible to everyone else on the team. If you need to hand something off, your teammate starts from zero because there's nothing to hand off.

We spent a decade making development collaborative: Git, pull requests, shared CI, and dashboards that anyone can open. Then we built AI dev tools that made development siloed!

The agent writes a piece of code, which makes it to a PR and goes through code review. Next sprint, someone rewrites half of that code, and a month later a bug in it intermittently brings production down. Engineers then collaborate on Slack or GitHub to fix the regression.

Your agent is out of the loop on all these decisions.

Engineers get better by shipping code that gets reviewed, deployed, broken, and fixed. At each stage, there are collective learnings for a team and individual. If you remove the agent from that loop, you are left with an agent that generates plausible code indefinitely, without ever learning which forms of “plausible” actually survive contact with production.

Okay! We are not going to move away from every developer running a local AI coding agent, whether in a CLI, an IDE, or some newer form factor (ADE?!). However, the engineering org has no shared view of what agents are doing or how they are performing across the team. Which systems are they touching? How much are they spending?

The economics will force the question soon enough. Uber's CTO told The Information this year that his team had already burned through its entire 2026 AI coding budget.

Agent amnesia is essentially becoming an economics question. Token spend multiplied by each engineer and compounded daily is a harder problem for engineering teams to solve because they can’t walk away from the productivity gains that got them here in the first place.
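To make the "token spend multiplied by each engineer" point concrete, here is a minimal sketch of rolling per-session token usage up to the team level. Everything in it is an assumption for illustration: the engineer names, team names, token counts, and the blended price are hypothetical and do not come from any real tool or vendor.

```python
from collections import defaultdict

# Hypothetical usage records: (engineer, team, tokens used in one session).
sessions = [
    ("ana", "payments", 120_000),
    ("ana", "payments", 80_000),
    ("raj", "search", 200_000),
]

# Assumed blended price; real pricing varies by model and vendor.
USD_PER_MILLION_TOKENS = 10.0


def spend_by_team(records, usd_per_million):
    """Sum token usage per team and convert the totals to dollars."""
    totals = defaultdict(int)
    for _engineer, team, tokens in records:
        totals[team] += tokens
    return {
        team: tokens / 1_000_000 * usd_per_million
        for team, tokens in totals.items()
    }


print(spend_by_team(sessions, USD_PER_MILLION_TOKENS))
```

The arithmetic is trivial. The hard part is that these records have to exist in one shared place, and private sessions on individual machines do not provide that by default.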

For you. Individually. On your machine. Yes.

I'm not arguing that terminal agents don't work. They clearly do. Claude Code, Codex, they're great tools. And, we're not trying to replace them.

In fact, features like steering control, sub-agent framework, hooks, and plugins are all immensely useful for a developer, helping them produce more code than ever!

To be clear, these tools do have memory. Claude Code reads a project-level CLAUDE.md, a user-level one, and persists notes in a local memory directory that survive across sessions.

It's the durable team knowledge that is lost in local agentic coding sessions. The senior engineer's hard-won understanding of the billing module lives in her markdown files, on her laptop. When a colleague in another region opens their first session, none of it carries over.

Edsger W. Dijkstra wrote that the competent programmer is fully aware of the strictly limited size of his own skull. Agents don't have skulls. They don't have to forget. We made them forget because we modeled them on ephemeral terminal sessions instead of durable environments where teams actually work.

Here's what the next generation looks like, once we stop treating amnesia as a given:

Knowledge should compound. Instead of a context file lingering on one developer’s machine, every code review, resolved ticket, and architectural discussion should feed a layer that makes the agent more useful next month than it is today. This is a shared knowledge layer, scoped by team, project and domain. When a new engineer joins, the agent already carries the institutional memory that probably lived in a few senior engineers' heads.

Work should persist and be resumable. Start a task in a Slack thread. Get interrupted. Hand it to a teammate. Come back two days later, and the work is still there. It’s visible, commentable, and resumable by anyone with the right access. It’s not trapped in a browser tab on one developer’s laptop.

The agent should be governed like any other system with production access. Most coding agents today are priced per seat and scoped per user. Your spend is whatever your developers happen to burn. Your access controls are whatever the tool defaults to, which is usually "everything." Your visibility into what the agent is actually doing across your org is approximately zero. You wouldn't give every engineer root access to prod. There's no reason to give an AI agent flat access to your entire org's context, either.

Context should be auto-assembled from everywhere, not pasted in by hand. The agent should pull from your codebase, tickets, docs, observability stack, cloud infrastructure, and the knowledge your team has built up over time. The developer's job is to direct the agent, not to be its research assistant.
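As a rough illustration of the ideas above (a shared knowledge layer, scoped access, and context assembled automatically instead of pasted by hand), here is a minimal in-memory sketch. It is not how any particular product works: the class names, the scope strings such as `team:payments`, and the naive keyword matching are all hypothetical stand-ins, and a real system would need proper retrieval and access control.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class KnowledgeEntry:
    """One durable piece of team knowledge (hypothetical schema)."""
    scope: str   # e.g. "team:payments"
    source: str  # where it came from: "code-review", "ticket", "slack"
    text: str


@dataclass
class SharedKnowledgeLayer:
    """In-memory stand-in for a shared, scoped knowledge store."""
    entries: list[KnowledgeEntry] = field(default_factory=list)

    def record(self, scope: str, source: str, text: str) -> None:
        """Add a lesson learned from a review, ticket, or discussion."""
        self.entries.append(KnowledgeEntry(scope, source, text))

    def assemble_context(self, allowed_scopes: set[str], query: str) -> list[str]:
        """Return entries the caller may see whose text shares a word with the query."""
        words = {w.lower() for w in query.split()}
        return [
            f"[{e.source}] {e.text}"
            for e in self.entries
            if e.scope in allowed_scopes
            and words & {w.strip(".,").lower() for w in e.text.split()}
        ]


layer = SharedKnowledgeLayer()
layer.record("team:payments", "slack", "Old auth service is being deprecated.")
layer.record("team:payments", "code-review", "Let errors bubble up unwrapped.")
layer.record("team:search", "ticket", "Reindex jobs run nightly.")  # made-up entry

# An agent working for the payments team only sees payments-scoped knowledge.
context = layer.assemble_context({"team:payments"}, "auth migration")
print(context)
```

The design point is that knowledge is written once, by whoever learned it, and read by any session with the right scope. The developer supplies the task, not the background.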

The No. 1 complaint about coding agents is that they generate throwaway code. Too much rework and too many iterations before something is actually mergeable. Better context on the first pass, however, means better code on the first pass.

Today, we are introducing CodeRabbit Agent for Slack.

We're starting with the core loop: planning, code generation, review, investigation, and knowledge-augmented development. One agent for your entire software development lifecycle. It’s all inside Slack, synchronous, and with guardrails in place.

But the vision is bigger than a coding agent in Slack.

What we're building toward is an agentic layer across the entire SDLC, a layer that understands systems, connects tools, retains the team's knowledge, and executes engineering workflows end-to-end.

We believe the agent is only as useful as the context it can reach and the actions it can take.

The endgame is the layer that connects the stack and makes it programmable from the place where your team already works.

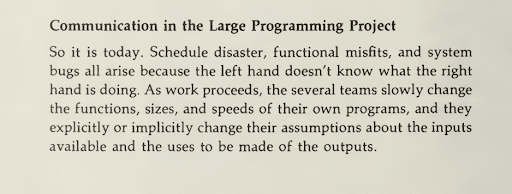

Individual productivity is up across every engineering team adopting AI. Team-level productivity is still stuck, and it's stuck for three reasons that have nothing to do with model quality: there's no explainable record of what the agent actually did, no cost attribution that matches how teams are organized, and the agent doesn't live where engineering actually happens. Solve those, and team productivity starts to compound the way individual productivity already has.

The agents that win won't be the ones with the best model. They'll be the ones that already know your systems when you open them — the conventions, the deprecations, the decisions that didn't make it into a doc. That's durable team knowledge, and it's what's missing from every tool shipping today.

I'm not going to tell you this replaces developers. If you've shipped production code, you know that the hard part of engineering isn't typing. It's judgment. It's understanding the system. It's knowing what to build and what not to build.

At CodeRabbit, this is the bet we've been making for years. Our independent, purpose-built context engine, which runs two million code reviews a week, is how we've encoded what good engineering teams actually do. CodeRabbit Agent extends that engine into Slack with your team's own decisions and patterns on top. Work happens in the open, where teammates can see it, jump in, and pick up where someone left off. Context compounds, rather than dying at the end of a session.

Whoever solves agent amnesia wins. Whoever keeps building faster with amnesiac agents is building a faster version of the wrong thing.

Sources:

Figure 1: Becker, J., Rush, N., Barnes, E., & Rein, D. Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity. METR, July 10, 2025; Xu, F., Medappa, P. K., Tunc, M. M., Vroegindeweij, M., & Fransoo, J. C. AI-assisted Programming May Decrease the Productivity of Experienced Developers by Increasing Maintenance Burden. arXiv:2510.10165, 2025.