Brandon Gubitosa

April 27, 2026

15 min read

April 27, 2026

15 min read

Cut code review time & bugs by 50%

Most installed AI app on GitHub and GitLab

Free 14-day trial

For most of the last decade, adding AI to your development workflow meant giving developers a better autocomplete. The code still came from a human. The judgment still came from a human. The review process was still built around a human reading through a diff and leaving comments. AI made individual steps faster but didn't change the fundamental shape of how software got built.

That shape is changing now. Engineering teams are deploying coding agents that complete entire workflows autonomously, and the software development lifecycle is reorganizing around them in ways that the tooling, the processes, and in many cases the mental models haven't fully caught up with yet. If you want a grounded account of how that shift happened, A very brief history of AI coding, from Copilot to next-gen agents is a good place to start.

This post is a practical look at what the agentic SDLC actually is, how it differs from AI-assisted development, where it creates new problems, and what a well-structured agentic stack looks like moving forward.

The agentic software development lifecycle (agentic SDLC) is a software delivery practice where AI agents participate meaningfully across the full lifecycle: planning, coding, testing, reviewing, deploying, and operating.

Rather than sitting at a single checkpoint like autocomplete or PR review, agents work alongside humans across the whole workflow, carrying context between stages, taking action on the team's behalf, capturing what the team learns, and staying accountable for what they do.

A simple mental model: an agent takes a ticket, reads the codebase, writes an implementation across multiple files, runs the test suite, iterates on failures, and opens a pull request. The developer sets the intent. The agent handles the execution. The developer reviews the result.

The distinction matters more than it might seem. It determines how teams build review and governance infrastructure.

AI-assisted development means a developer uses AI to move faster through work they're directing: generating a function, explaining an error, suggesting a refactor. The developer stays in the loop at every meaningful decision point.

Agentic development means the AI pursues a goal across multiple steps without a human directing each one. It plans, executes, evaluates its own output, loops, and hands off a completed artifact at the end. The developer sets the intent and reviews the result, but the workflow in between runs autonomously.

AI-assisted autocomplete at a single checkpoint doesn't require organizational trust. An agent running a 15-iteration debugging loop across 40 files does.

The three delivery models are running in parallel across the industry right now. The differences center on who owns execution and where the bottleneck lives.

| Model | Who owns execution | Where the bottleneck lives |

| Traditional SDLC | Humans at every phase | Coding speed and reviewer availability |

| AI-assisted development | Humans direct, AI accelerates | Reviewer availability and context switching |

| Agentic SDLC | Agents execute, humans verify | Verification, review, and governance |

Treating the agentic SDLC as a faster version of what you already have produces a familiar failure mode. AI-generated code arrives faster, in larger batches, and the review process buckles under the volume. DORA's 2024 Accelerate State of DevOps Report calls this the "verification tax": time saved writing code gets re-spent auditing it.

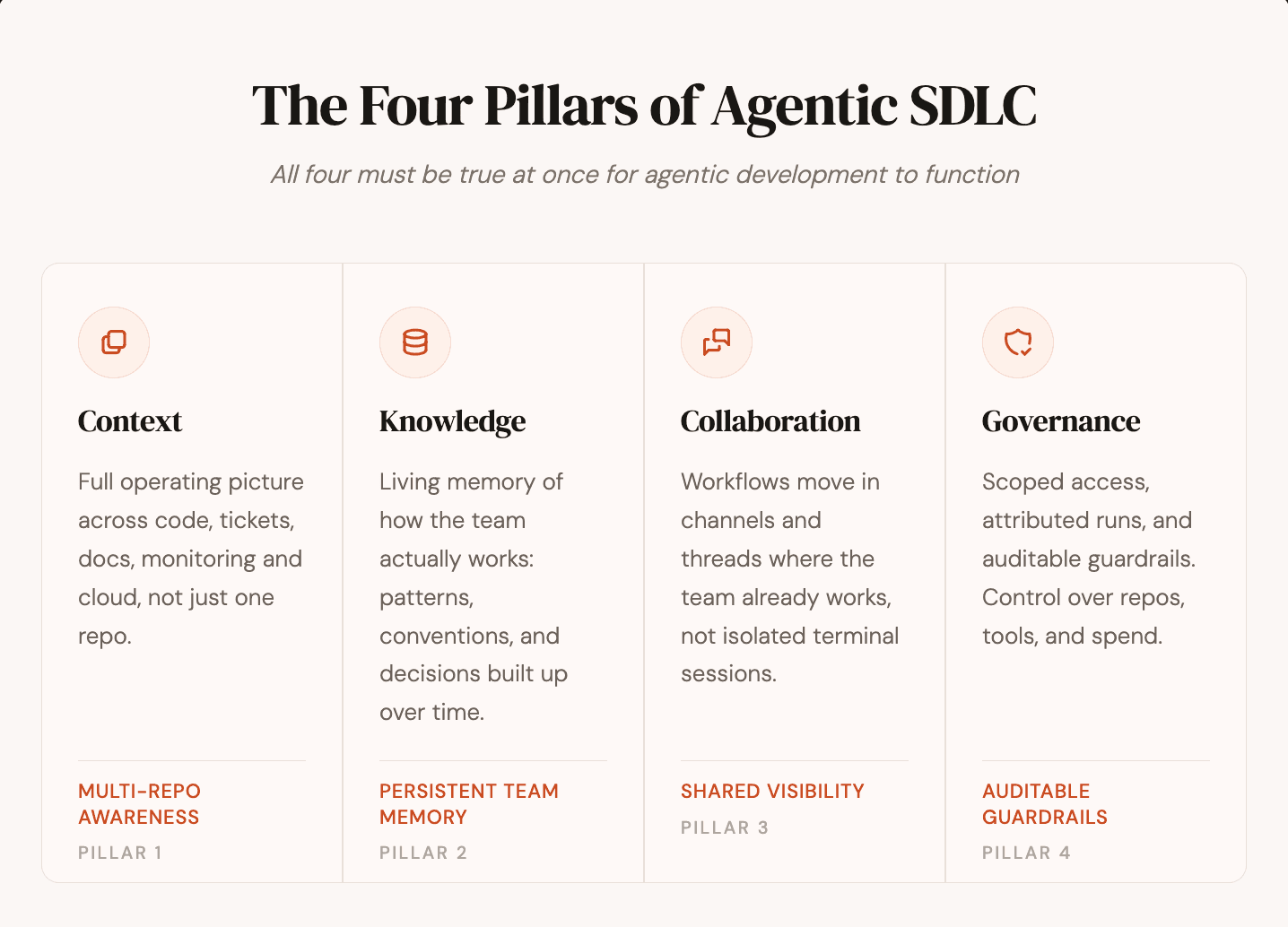

Dropping a coding agent into an existing workflow does not, by itself, create an agentic SDLC. For agents to produce reliable output at enterprise scale, four capabilities usually need to be in place.

Context: The agent needs the organization's operating picture across code, tickets, docs, monitoring systems, and infrastructure context, not just a single repository. Agents that only reason inside one repo miss the rest of the story: the incident thread in Slack, the ticket that explains why the code is structured a certain way, the runbook that defines acceptable behavior.

Knowledge: Starting context is not enough. The agent also needs persistent memory of how the team works: accumulated patterns, conventions, prior decisions, and learned constraints, so it does not restart from zero on every task.

Multi-player collaboration: Software is not built solo. Agentic workflows need to move forward in the shared surfaces where teams already coordinate, such as Slack threads, tickets, and review systems, not only inside isolated terminal sessions that create no team visibility.

Governance: Enterprise teams need scoped access, role-based controls, and auditable guardrails. They need control over which repositories agents can reach, which tools they can invoke, which organization a run belongs to, and how behavior is configured and reviewed.

Without all four, the result is usually not an agentic SDLC. It is isolated coding assistance that may be faster, but is still missing the context, memory, collaboration, and control required for reliable operation at scale.

Agents are running production workflows today across six recognizable patterns, using coding tools like Claude Code, Cursor, Codex, and Gemini. The output is landing directly in codebases and heading toward production:

Debugging workflows: An agent receives a failing test or bug report, reads the stack trace, traces execution, hypothesizes causes, writes and reruns fixes, and iterates until it resolves the issue.

Refactoring workflows: An agent analyzes architectural problems across a codebase, proposes a restructuring, applies changes across dozens of files, and validates that behavior is preserved throughout.

Security scanning workflows: An agent searches for vulnerabilities including hardcoded secrets, unsafe deserialization, and missing input validation, flagging findings with enough context to be actionable rather than just a list of line numbers.

Feature development workflows: An agent takes a ticket or spec, writes the implementation, handles edge cases, adds tests, and opens a pull request covering the full cycle from intent to code.

Incident response workflows: A production alert triggers an agent to root-cause the issue, propose a fix, run regression tests, and surface findings in the same Slack thread where the alert fired, before on-call gets paged.

Documentation workflows: Merged PRs automatically trigger doc updates, keeping endpoints, configs, and changelogs in sync with the code that shipped.

Six different workflows. One pattern underneath: the agent owns execution, the human owns intent and review. Each one used to require a senior engineer's full attention. Now they run continuously, often in parallel, often without anyone watching closely until the PR lands.

The traditional SDLC has well-defined phases: planning, development, testing, review, deployment. The agentic SDLC doesn't replace those phases. It changes who, or what, does the work in each one.

This is where quality gets determined rather than just documented. When agents do the implementation, unclear intent doesn't just slow a developer down. It produces a PR that's technically correct but functionally wrong, and reworking that is expensive.

Teams using coding agents invest more in planning as a result, turning requirements into precise, codebase-grounded specs before any code gets written. CodeRabbit Plan is designed for this moment in the workflow.

This is where agentic systems are furthest along. Coding agents complete full feature workflows, run autonomous debugging loops, and handle refactoring that previously consumed senior engineers for days.

The velocity gains are real and measurable, especially on well-scoped tasks where the intent is precise enough to make the output predictable. What used to require a senior engineer's attention for an afternoon now runs in the background while the engineer works on something else. The constraint is no longer how fast a human can type.

Agents write failing tests that reproduce a bug from a stack trace, then modify application logic until the test passes. Test coverage and regression checks become continuous rather than scheduled.

Coverage gaps surface as soon as they appear, not at the end of a sprint. Agents can run the full test suite on every change, propose new tests where coverage is thin, and flag flaky tests that mask real issues. The shift is from periodic verification to a constant background check on the codebase's behavior.

This is where the verification gap lives. Agents generate code faster than teams can verify it, and the opacity of agentic output makes verification harder than reviewing human-written code. The diff looks like any other diff.

What's underneath is harder to reconstruct: the iterations, the intermediate decisions, the side effects across files. This phase has changed the least in response to agentic development, despite being the most directly affected by it.

Merge and deploy remain largely human-gated, but the boundary is shifting. Production alerts trigger agent-led root-cause analysis. Runbook execution that used to require an on-call engineer now runs through an agent that correlates the alert with recent commits, traces likely causes, and proposes a fix in the same Slack thread.

The workflow doesn't end at merge anymore. It extends into a continuous operational loop where agents participate in observability, incident response, and post-incident review.

Teams have enough velocity, but they lack confidence in what that velocity produces. Agents generate code faster than teams can verify it, and that verification gap creates specific, measurable problems. As we covered in the hidden cost of AI coding agents, the real cost is misalignment that compounds quietly across every stage of the workflow, not tokens or compute.

When a developer writes a PR, a reviewer can ask questions, trace reasoning, and understand the intent behind specific choices. When an agent runs a debugging workflow across 40 files, iterates through 15 passes, and touches 200 lines of code, reconstructing what changed and why requires active forensics rather than just reading a diff.

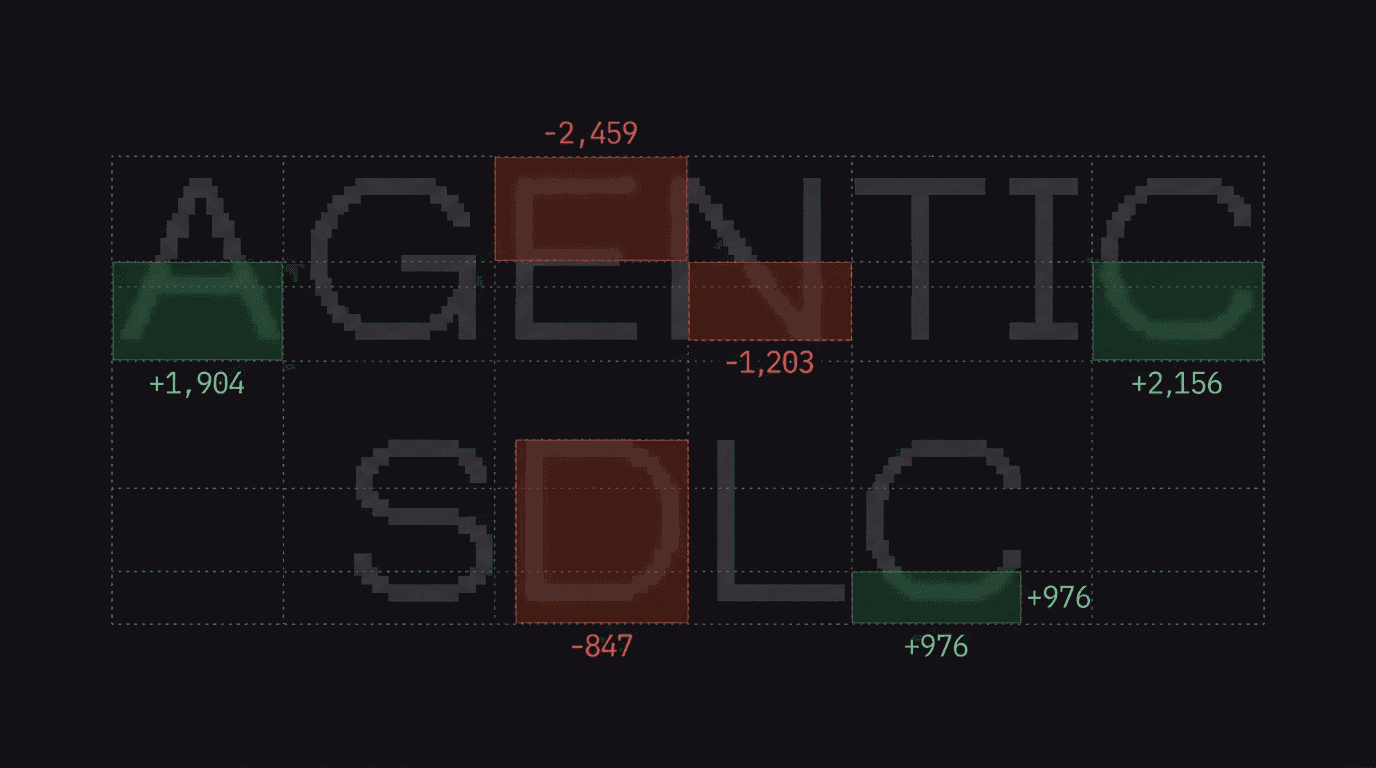

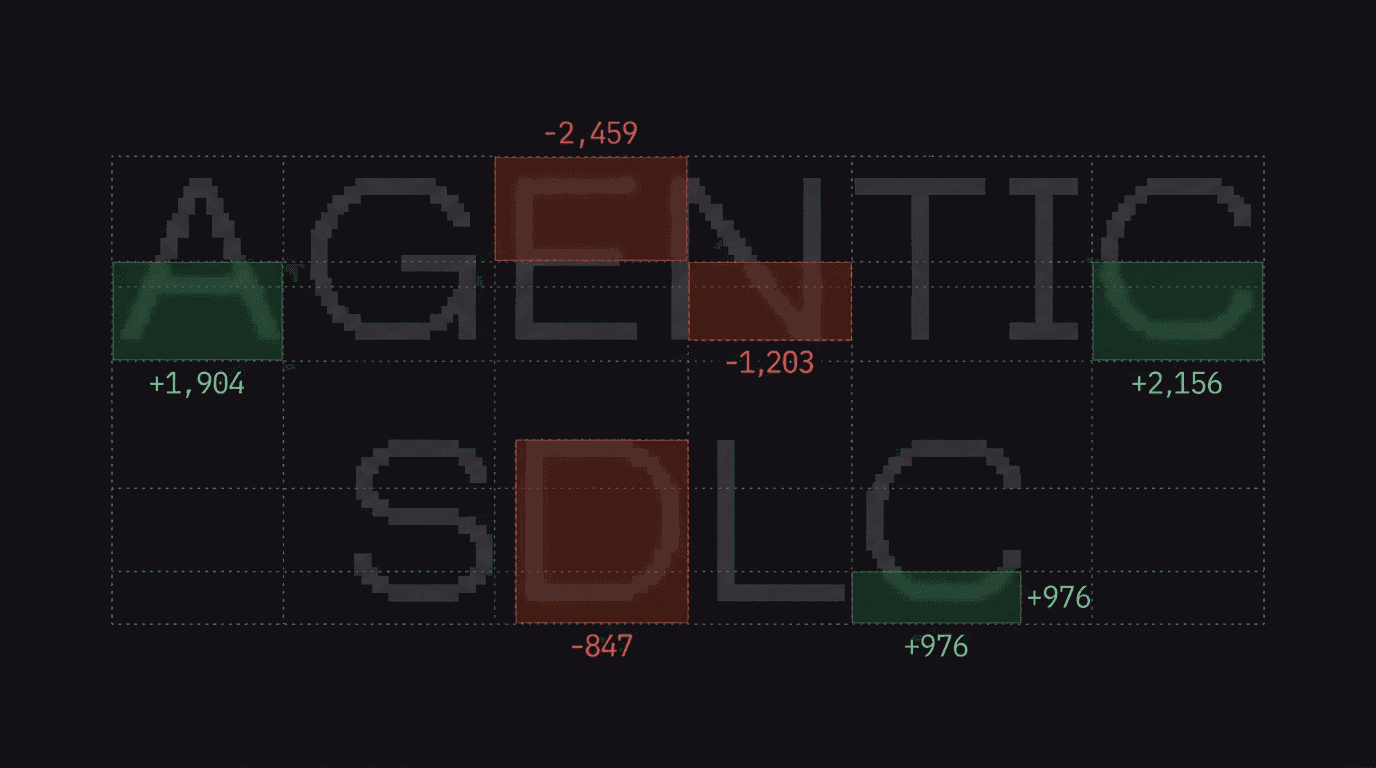

CodeRabbit's State of AI vs Human Code Generation Report puts numbers on the gap. Across 470 open-source GitHub pull requests, AI-co-authored PRs produced 10.83 issues per PR compared with 6.45 for human-only PRs. Readability issues spiked 3.15x. Error-handling issues nearly doubled.

The distribution is heavier-tailed too: at the 90th percentile, AI PRs contained 26 issues versus 12.3 for human PRs. David Loker, the report's author, named the pattern: "AI accelerates output, but it also amplifies certain categories of mistakes."

That data shows up as four specific problems teams are dealing with in practice.

Behavioral drift: An agent's output is individually sensible at each step but collectively introduces subtle changes to how code behaves. Standard tests and surface-level review miss them.

Standards inconsistency: Different agents, running at different times with different context, apply different interpretations of what good code means in a given codebase. Output is technically functional but architecturally inconsistent.

Security opacity: Vulnerabilities introduced by agentic output aren't visible through standard linting or static analysis. They emerge from the interaction between changes rather than from any single flaggable line.

Governance gaps: Engineering leaders have no reliable way to understand what agents shipped, whether it met the team's standards, or where the risk concentrations are across a codebase with many agents running in parallel.

Teams that hit these problems first are already solving for them. The earliest were small engineering teams shipping mostly AI-generated code, where the verification gap got sharp enough to force a fix before it broke the release schedule.

For example, at Mastra, a 16-person team shipping a TypeScript agent framework with over 300,000 weekly downloads, the confidence problem was concrete. The team had no AI review tool they trusted before adopting CodeRabbit.

After switching, engineers resolved 70 to 85% of critical comments before any PR was merged. Follow-up PRs stopped. CTO Abhi Aiyer described the shift: "We just listen to it as if it's always correct. And so far it has been."

Standard automated review tools were designed for a different problem. Linters, static analysis tools, and basic CI checks were built to help human reviewers catch surface-level issues: known patterns, syntax errors, formatting rules.

They weren't built to reason about what an agent was trying to accomplish across a complex multi-file change, whether it drifted from the original intent, or whether the approach aligns with how the codebase handles similar problems elsewhere.

An effective review layer for agentic output has to do four things traditional tooling doesn't.

Codebase context: Understand the codebase deeply enough to evaluate a change against the actual patterns and standards of that specific codebase, not generic best practices. This means analyzing file relationships, code dependencies, past PRs, and linked issues to reconstruct intent rather than just flagging diffs.

Consistent standards: Apply the same bar regardless of where the code came from, so a senior engineer's PR, a junior developer's PR, and an agent's fifth iteration of a refactoring workflow all get reviewed against the same standard.

Generation-speed throughput: Run at the speed of generation rather than the speed of human attention. A review layer that creates a queue is just moving the bottleneck, not solving it.

Actionable specificity: Explain findings with enough detail to be useful. A comment that says "consider refactoring this" doesn't help on an agent-generated PR where the developer needs to decide whether to accept, reject, or modify the output.

CodeRabbit is built for this problem. The context engine pulls in multi-repo context, past PRs, linked issues, and team decisions to review changes against the actual codebase rather than in isolation. Code Guidelines let teams configure the same standards in the review layer that they've set in their coding agents, so the rules stay consistent across generation and review.

At Abnormal AI, a 250-engineer cybersecurity company running an AI-native playbook with Cursor and background agents, the verification problem was the bottleneck. After integrating CodeRabbit, the team accepted 65%+ of critical-severity comments, saved 100+ hours of reviewer time in the last 30 days, and caught a prompt injection vulnerability in an internal chatbot project that humans had missed.

VP of AI Strategy Shrivu Shankar named the structural fit: "CodeRabbit provided a consistent enforcement layer both for AI-generated code and for engineers still writing code manually."

The pattern emerging among teams running agents at scale is consistent.

Agents are used for well-scoped workflows where intent can be expressed precisely. Vague tickets produce vague PRs regardless of how capable the agent is.

Planning gets more investment than it did in a purely human-driven workflow, because the cost of unclear intent is higher when an agent is going to run with it for 15 iterations before anyone reviews the result.

Review is treated as an active layer in the stack, not a legacy process. Automated review runs at the speed of generation. Human review is reserved for the decisions that genuinely require human judgment.

Standards and guidelines are defined once and applied everywhere in the coding agent configuration, in the review layer, and in the CI pipeline, so context switching between tools doesn't mean re-explaining what good code looks like in this codebase.

Governance tooling gives engineering leaders visibility into what agents shipped, where risks concentrate, and whether standards are applied consistently across teams and codebases.

At the scale CodeRabbit operates, the math is clear: 2 million PRs reviewed per week across 15,000+ customers and 3 million repos. Verification is a load-bearing layer in the agentic stack, not a checkbox added at the end.

The generation side of the agentic SDLC will keep moving fast. Agents are getting more capable, the range of workflows they complete autonomously is widening, and the teams adopting them are pulling real velocity gains out of it. The constraint is shifting from speed to quality.

The gap between what agents generate and what teams can confidently verify determines how much of that velocity becomes production-ready software. Closing that gap is the work of the agentic SDLC, and the split is clear:

Agents handle execution: Multi-file changes, test loops, refactors, scaffolding, and the routine workflows where intent can be expressed precisely.

Verification runs at agentic speed: Codebase context, standards enforcement, security and style checks, and the volume that would otherwise queue up against human reviewer attention.

Humans handle judgment: Architecture, design trade-offs, and the system-level decisions where domain context is irreplaceable.

CodeRabbit is built to be the verification layer of the agentic SDLC, and it runs wherever developers work: pull requests, IDEs (VS Code, Cursor, Windsurf), CLI, and code review through Slack. Every PR gets a walkthrough summary and a run of 50+ bundled linters and SAST tools inline before a human reviewer sees it.

Standards enforcement scales with the team. Conventions encoded once into CodeRabbit apply across every PR an agent or engineer opens, which is what makes verification at agentic speed possible without sacrificing what good code looks like in your codebase.

The agentic SDLC works when verification keeps pace with generation. Ready to put it in place? Get a 14-day free trial of CodeRabbit today.